For Indian businesses, digital transformation is no longer about experimentation or early adoption. That phase is over. In 2026, transformation is judged by outcomes—speed, efficiency, resilience, and measurable business impact. Organizations that digitized processes over the last few years are now asking harder questions:

- Are we faster than competitors?

- Are our costs under control?

- Can we scale without chaos?

- Are we ready for uncertainty—economic, regulatory, or operational?

The companies that answer “yes” are not just using digital tools; they are aligning technology with strategy. This blog explores the most important digital transformation trends Indian businesses must pay attention to in 2026—not hype-driven ideas, but trends that are actively shaping how organizations operate, compete, and grow.

Top 8 Digital Transformation Trends

1. AI Moving from “Innovation” to Everyday Operations

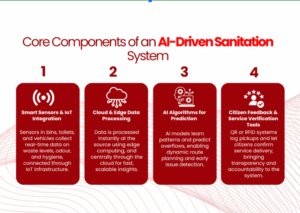

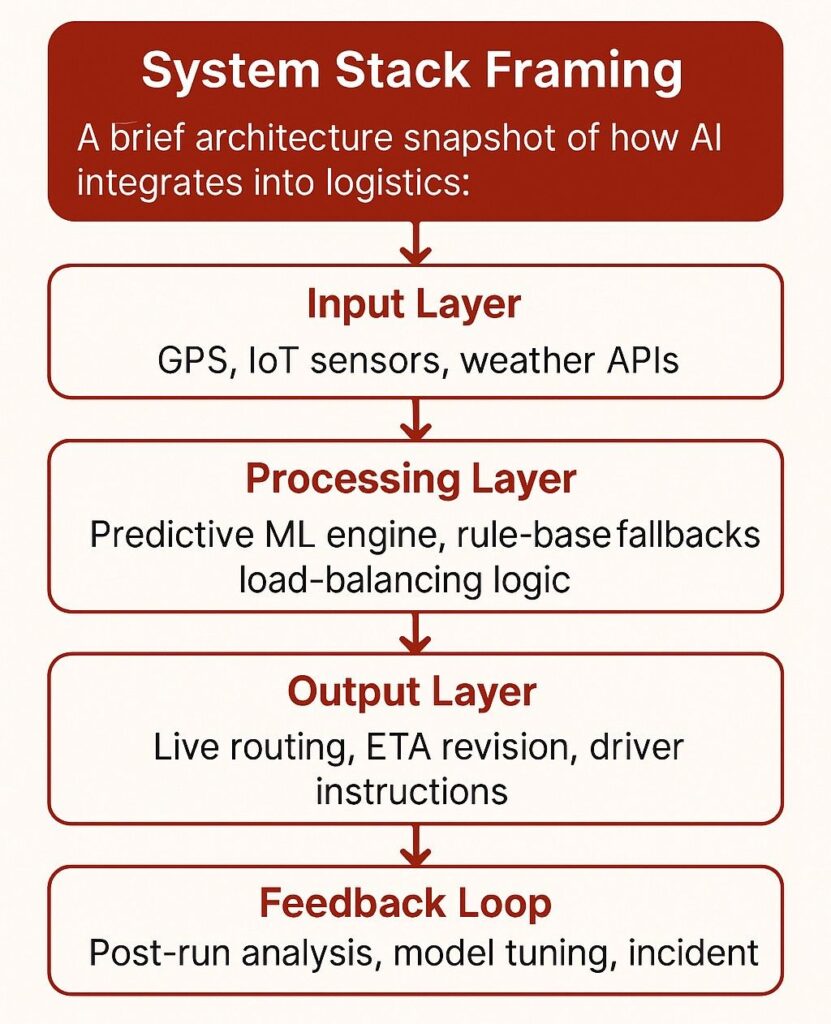

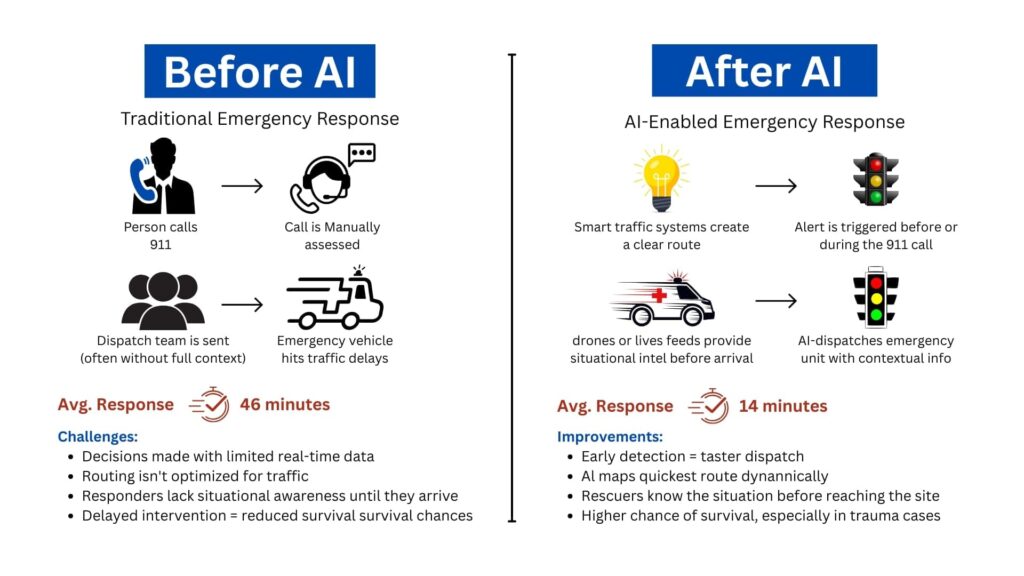

Artificial Intelligence is no longer confined to labs, pilots, or innovation teams. In 2026, AI has moved into core business workflows. Indian enterprises are increasingly using AI to make decisions faster, reduce manual effort, and improve accuracy across functions. What’s changed is not just the technology, but how comfortably teams now rely on AI outputs. Common AI-driven applications include:

-

Demand forecasting and sales prediction

-

Fraud detection and risk scoring

-

Intelligent customer support and chatbots

-

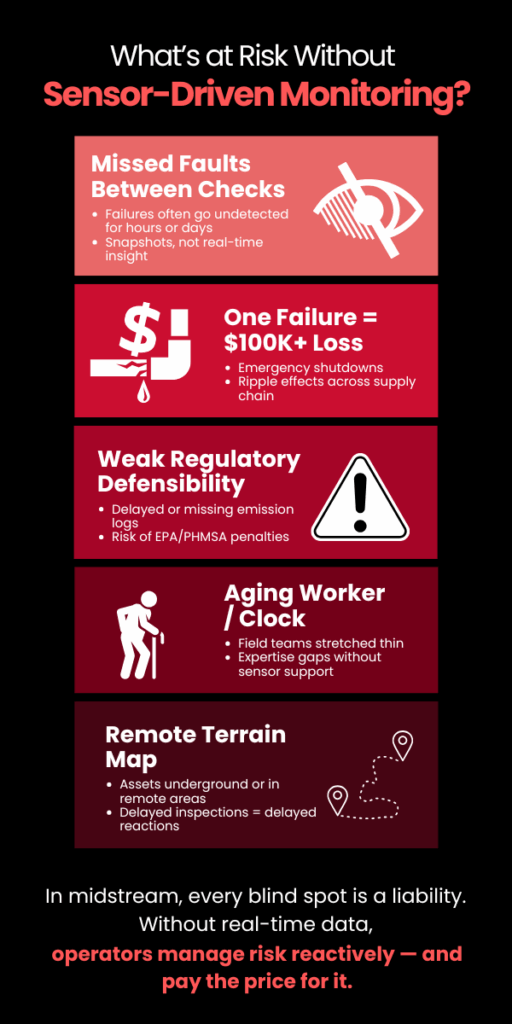

Predictive maintenance in manufacturing

-

Document processing and data extraction

AI is no longer seen as “advanced technology.” It is becoming standard infrastructure, much as ERP systems once did.

2. Automation at Scale, Not Just Task-Level Automation

Earlier automation efforts focused on individual tasks—one report, one approval, one process. In 2026, businesses are moving toward end-to-end process automation. This shift is especially visible in Indian enterprises dealing with scale and complexity, such as BFSI, logistics, manufacturing, and government-linked organizations. Instead of automating isolated steps, companies are redesigning workflows to remove friction entirely. High-impact automation areas include:

- Lead-to-cash processes

- Procure-to-pay cycles

- Customer onboarding

- Compliance reporting

- Incident and service request management

The goal is no longer “doing tasks faster,” but reducing dependency on manual intervention altogether.

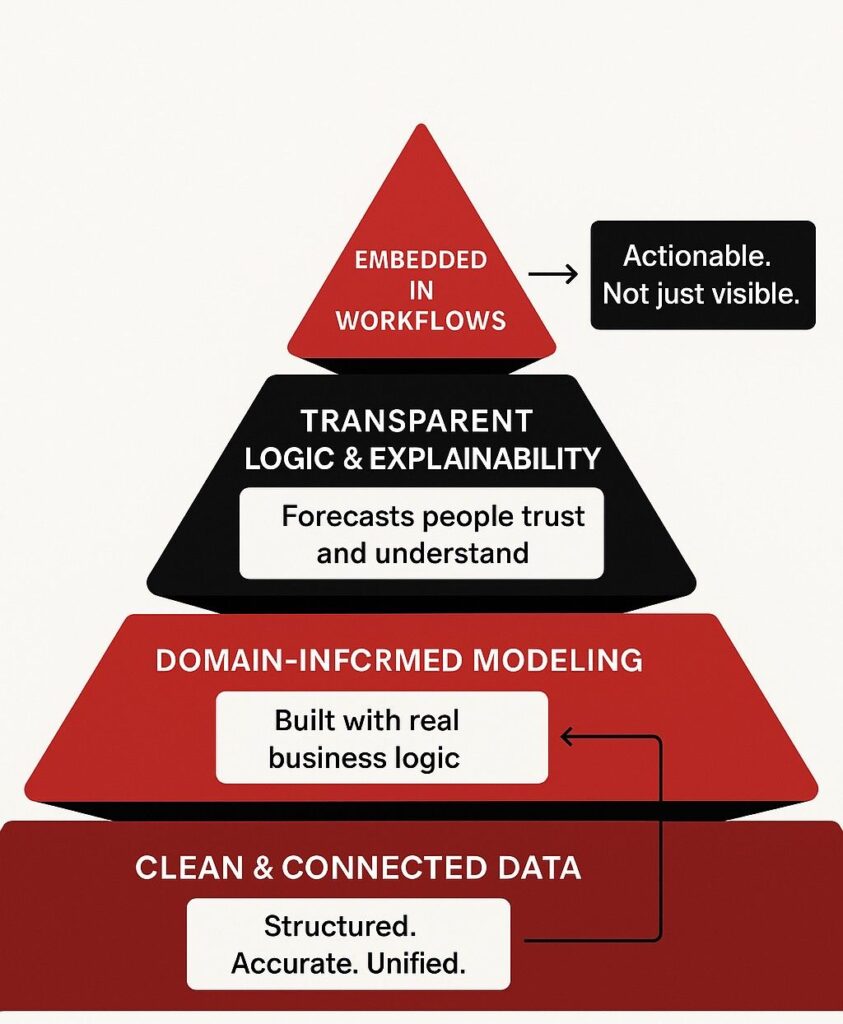

3. Data Becoming a Strategic Asset, Not Just a Reporting Tool

Most organizations collect data. Very few use it well. In 2026, Indian businesses are beginning to treat data as a strategic business asset, not just something for dashboards and monthly reviews. Leadership teams increasingly expect real-time insights, predictive signals, and scenario analysis. This shift is driven by:

- More affordable analytics platforms

- Cloud-based data lakes

- Improved data governance frameworks

- Growing pressure for faster decision-making

Instead of asking “What happened last quarter?”, businesses are asking:

- “What’s likely to happen next?”

- “Where should we intervene now?”

- “What decision will give us the highest return?”

This mindset change is one of the most important digital transformation trends of the decade.

4. Cloud as the Default Operating Model

Cloud adoption in India has matured. The debate is no longer “Should we move to the cloud?” but “How do we optimize cloud usage?” In 2026, cloud has become the default platform for new applications, data systems, and digital services. Hybrid and multi-cloud strategies are especially common, driven by compliance, performance, and cost considerations. Key cloud trends shaping transformation include:

- Cloud-native application development

- Migration of legacy workloads with modernization

- Cost governance (FinOps) becoming critical

- Cloud supporting AI, analytics, and automation workloads

Businesses that fail to control cloud sprawl or cost inefficiencies often lose the financial benefits they expected, which makes governance as important as adoption.

5. Cybersecurity Becoming a Business Risk Function

Cybersecurity is no longer just an IT responsibility. In 2026, Indian organizations increasingly treat it as a business risk and continuity issue. With rising cyber threats, stricter compliance expectations, and increased digital exposure, security decisions now involve leadership, legal, and operations teams. Key cybersecurity shifts include:

- Zero Trust security models

- AI-driven threat detection

- Cloud security posture management

- Incident response planning as a board-level concern

- Security-by-design in digital initiatives

Digital transformation without security is no longer acceptable. Security is now embedded, not added later.

6. Industry-Specific Digital Transformation (Not One-Size-Fits-All)

One major digital transformation trend in 2026 is the move away from generic transformation frameworks. Indian businesses are realizing that industry context matters. For example:

-

Manufacturing focuses on predictive maintenance and digital twins

-

BFSI prioritizes automation, risk analytics, and compliance

-

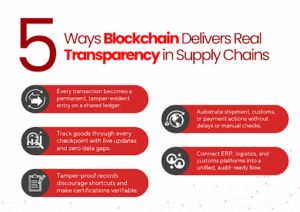

Retail emphasizes personalization and supply chain visibility

-

Healthcare invests in patient data, diagnostics, and workflow automation

-

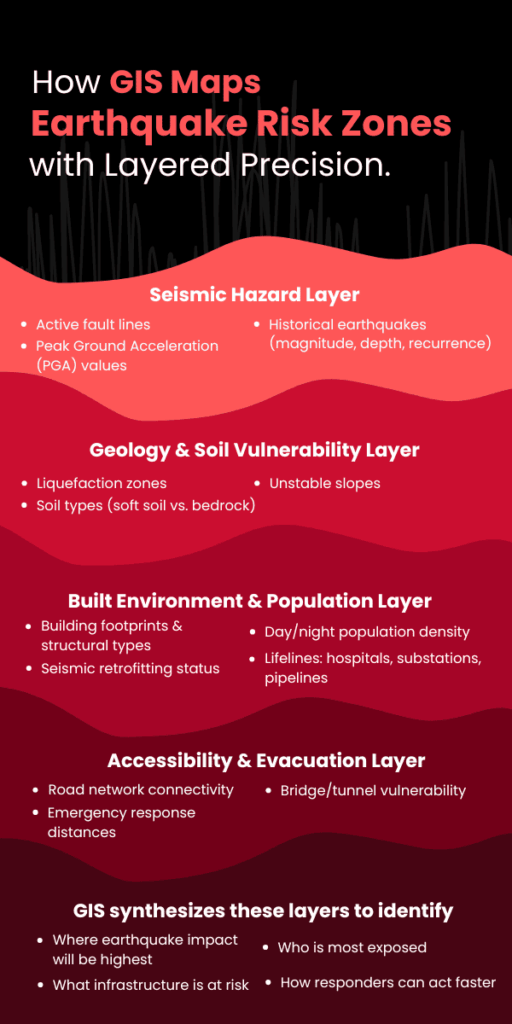

Government and urban bodies rely heavily on GIS and real-time dashboards

This industry-first approach makes transformation more practical and outcome-driven.

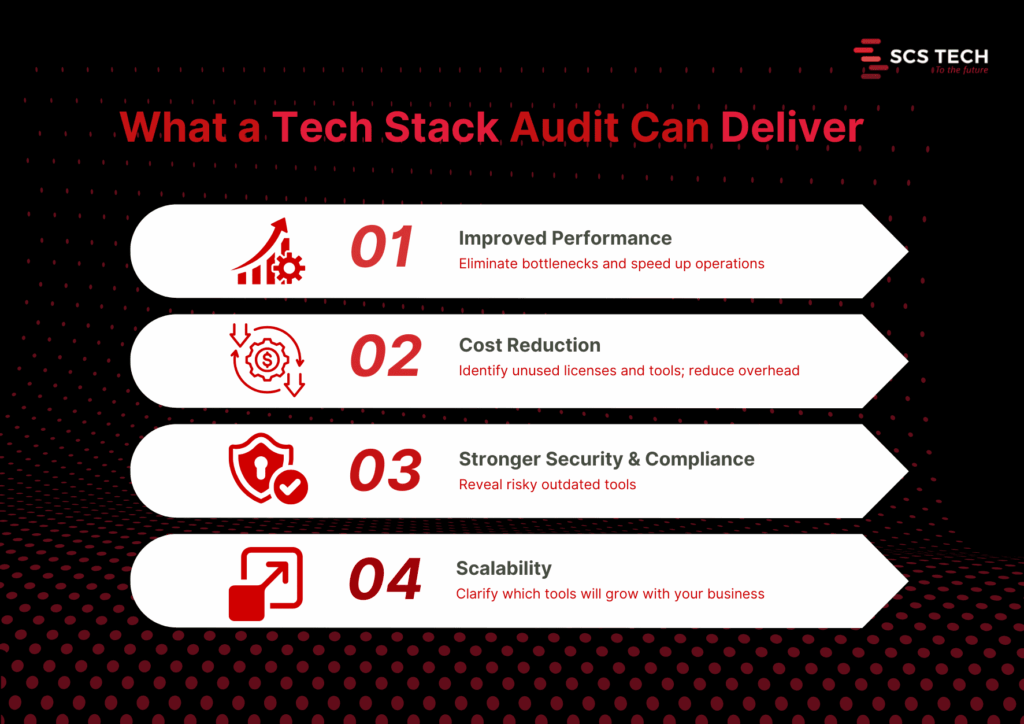

7. Integration Over Tool Proliferation

Over the last few years, many organizations adopted multiple tools—CRM, ERP, analytics platforms, automation software, and ticketing systems. In 2026, the challenge is integration. Disconnected systems slow down processes and reduce visibility. As a result, businesses are focusing on:

- API-based integration

- Unified dashboards

- Centralized data layers

- Reduced tool redundancy

The winners are not those with the most tools, but those with the most connected systems.

8. Digital Transformation Measured by ROI, Not Adoption

Perhaps the most important trend is this: digital transformation is now judged by business value, not technology adoption. Leadership teams expect clear answers to:

- How much cost did we reduce?

- How much faster are we operating?

- Did customer experience improve?

- Did productivity increase?

- Are risks better managed?

This shift has forced organizations to align digital initiatives directly with KPIs, revenue, efficiency, and growth goals.
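For teams that want to put a number on those questions, the arithmetic can be quite simple. The sketch below is only an illustration, not a prescribed method: the `Initiative` class, its fields, and the sample figures are hypothetical, and it assumes first-year ROI is calculated as (annual benefit minus cost) divided by cost.

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    """A hypothetical digital initiative, with illustrative fields."""
    name: str
    cost: float            # total spend on the initiative
    annual_benefit: float  # yearly savings plus added revenue attributed to it


def roi_percent(item: Initiative) -> float:
    """First-year ROI as a percentage of cost (assumed definition)."""
    if item.cost <= 0:
        raise ValueError("cost must be positive")
    return (item.annual_benefit - item.cost) / item.cost * 100


def payback_months(item: Initiative) -> float:
    """Months needed for the benefit to cover the cost, assuming an even monthly benefit."""
    if item.annual_benefit <= 0:
        raise ValueError("annual_benefit must be positive")
    return item.cost / (item.annual_benefit / 12)


if __name__ == "__main__":
    # Made-up figures for illustration only.
    onboarding = Initiative("Customer onboarding automation", cost=100.0, annual_benefit=150.0)
    print(f"{onboarding.name}: ROI {roi_percent(onboarding):.1f}%, "
          f"payback in {payback_months(onboarding):.1f} months")
```

In practice, the hard part is usually agreeing on what counts as benefit and how much of it to attribute to a given initiative; the calculation itself is the easy step.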

What This Means for Indian Businesses

Digital transformation in 2026 is no longer about keeping up—it’s about staying relevant. Indian businesses that invest in data-driven decision-making, automate intelligently, secure digital ecosystems, integrate systems effectively, and focus on industry-specific needs are the ones positioned for sustainable growth. Those who delay or treat transformation as a side initiative will struggle to compete in a faster, more digital-first economy.

Transformation Is No Longer Optional!

The biggest shift in 2026 is not technological—it’s strategic. Digital transformation has moved from being an IT project to becoming a core business capability. Indian businesses that succeed will be those that move beyond buzzwords and focus on execution, outcomes, and continuous improvement. For organizations navigating this complexity, having the right technology partner can simplify decision-making and accelerate results. SCS Tech India helps businesses translate digital transformation trends and strategies into real-world impact—by combining analytics, automation, cloud, cybersecurity, and domain expertise into scalable, outcome-driven solutions.