Public health surveillance has long been held back by delayed reporting, fragmented systems, and reactive measures. AI and machine learning change that structure. Today, machine learning models can detect abnormal patterns in clinical data, media signals, and mobility trends, often before traditional systems register a threat. But building such systems means understanding how AI handles fragmented inputs, scales across regions, and turns signals into decisions.

This article maps out what that architecture looks like and how it’s already being used in real-world health systems.

What Is an AI-Powered Public Health Surveillance System?

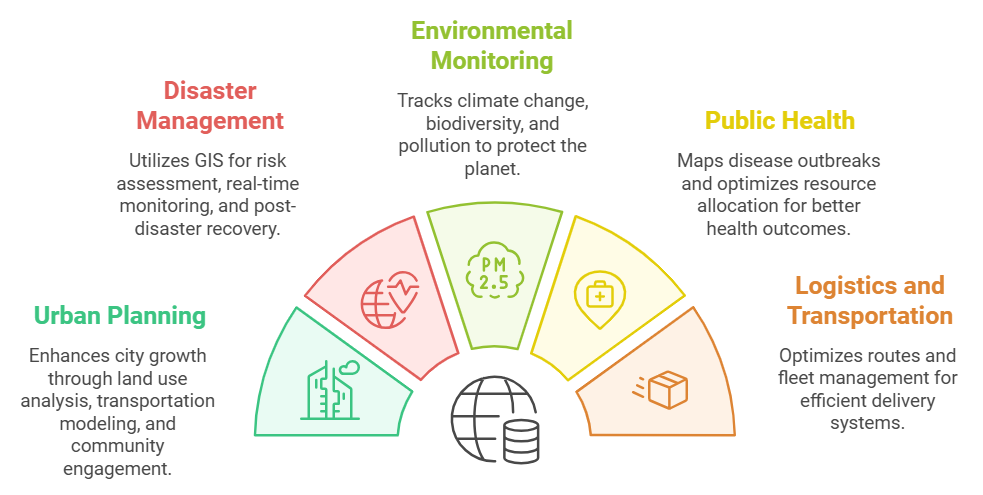

An AI-powered public health surveillance system continuously monitors, detects, and analyzes signals of disease-related events in real time, before those events spread through the wider population.

It does this by combining large amounts of data from multiple sources, including hospital records, laboratory results, emergency department visits, prescription trends, media articles, travel logs, and even social media content. Machine learning models trained to identify patterns and anomalies scan these inputs constantly to flag signs of unusual health activity.

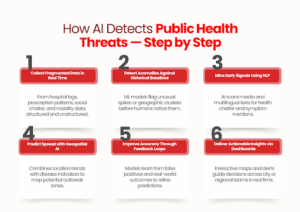

How AI Tracks Public Health Risks Before They Escalate

AI surveillance doesn’t just collect data; it actively interprets, compares, and predicts. Here’s how these systems identify early health threats before they’re officially recognized.

1. It starts with signals from fragmented data

AI surveillance pulls in structured and unstructured inputs from numerous real-time sources:

- Syndromic surveillance reports (e.g., fever, cough, and respiratory symptoms)

- Hospitalizations, electronic health records, and lab test trends

- News articles, press wires, and social media mentions

- Prescription spikes for specific medications

- Mobility data (to track potential spread patterns)

These are often weak signals, but AI picks up subtle shifts that human analysts might miss.
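Before any model can compare these feeds, they need a shared shape. The sketch below is a minimal illustration of that idea, not a reference design: the `Signal` record, the two adapter functions, and every field name in the input rows are assumptions made up for this example.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Signal:
    """One normalized observation, whatever feed it came from."""
    source: str      # which feed produced it
    region: str      # where it was observed
    day: date        # when it was observed
    indicator: str   # what was observed
    value: float     # how much of it


def from_ed_visit(row):
    # Hypothetical emergency department row: one row equals one visit.
    return Signal(
        source="ed_visits",
        region=row["district"],
        day=date.fromisoformat(row["visit_date"]),
        indicator=row["chief_complaint"].lower(),
        value=1.0,
    )


def from_pharmacy_sales(row):
    # Hypothetical pharmacy row: daily units sold per drug class.
    return Signal(
        source="pharmacy",
        region=row["region"],
        day=date.fromisoformat(row["date"]),
        indicator=f"rx:{row['drug_class']}",
        value=float(row["units_sold"]),
    )


signals = [
    from_ed_visit({"district": "North", "visit_date": "2024-01-15",
                   "chief_complaint": "Fever"}),
    from_pharmacy_sales({"region": "North", "date": "2024-01-15",
                         "drug_class": "antipyretic", "units_sold": "40"}),
]
```

Once every feed lands in the same record type, downstream models can group by region and day without caring where a row originated.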

2. Pattern recognition models flag anomalies early

AI systems compare incoming data to historical baselines.

Once the system detects unusual increases or deviations (e.g., a sudden surge in flu-like symptoms in a given location), it raises an internal alert in the monitoring system.

For example, BlueDot flagged the COVID-19 cluster in Wuhan by picking up reports of unusual pneumonia cases in local news articles, ahead of official warnings from global health agencies.
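Comparing incoming data to a historical baseline can be as simple as a z-score check. The sketch below is one common way to do it, not the method any named platform uses: the function name, the threshold of 3 standard deviations, and the sample counts are all assumptions for illustration.

```python
from statistics import mean, stdev


def flag_anomaly(history, today, z_threshold=3.0):
    """Compare today's count with a historical baseline.

    Returns (is_anomaly, z_score). `history` needs at least two values.
    """
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        # Perfectly flat history: treat any rise above it as a deviation.
        if today > baseline:
            return True, float("inf")
        return False, 0.0
    z_score = (today - baseline) / spread
    return z_score >= z_threshold, z_score


# Hypothetical daily counts of flu-like visits at one clinic
past_two_weeks = [12, 15, 11, 14, 13, 12, 16, 14, 13, 15, 12, 14, 13, 15]

is_alert, score = flag_anomaly(past_two_weeks, today=31)
print(is_alert, round(score, 1))
```

Production systems usually also correct for seasonality and day-of-week effects, which a plain z-score ignores.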

3. Natural Language Processing (NLP) mines early outbreak chatter

AI reads through open-source texts in multiple languages to identify keywords, symptom mentions, and health incidents, even in informal or localized formats.
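To make the idea concrete, here is a deliberately simplified sketch that maps symptom words in several languages to one shared concept and counts the mentions. Real NLP pipelines rely on trained language models rather than a lookup table; the `SYMPTOM_TERMS` lexicon, the function name, and the sample posts are assumptions for this example.

```python
import re
import unicodedata
from collections import Counter

# Hypothetical multilingual lexicon: surface word -> shared concept
SYMPTOM_TERMS = {
    "fever": "fever", "fiebre": "fever", "fièvre": "fever",
    "cough": "cough", "tos": "cough", "toux": "cough",
    "pneumonia": "pneumonia", "neumonía": "pneumonia", "pneumonie": "pneumonia",
}


def extract_symptom_mentions(texts):
    """Count symptom concepts mentioned across a batch of texts."""
    counts = Counter()
    for text in texts:
        # Normalize accents to one form and lowercase before tokenizing.
        cleaned = unicodedata.normalize("NFC", text).lower()
        for token in re.findall(r"\w+", cleaned):
            concept = SYMPTOM_TERMS.get(token)
            if concept is not None:
                counts[concept] += 1
    return counts


posts = [
    "Several cases of pneumonia and high fever reported",
    "Muchos pacientes con fiebre y tos",
    "Toux persistante et fièvre dans le quartier",
]
mentions = extract_symptom_mentions(posts)
print(mentions.most_common())
```

Mapping every language to the same concept is what lets a spike in one region's local-language posts show up in the same time series as English-language reports.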

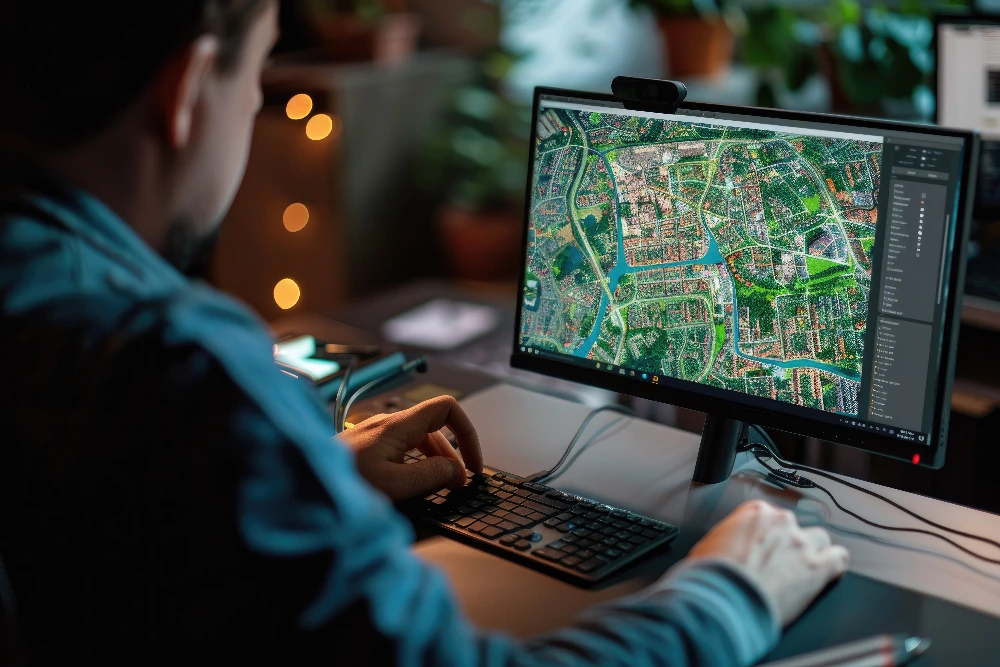

4. Geospatial AI models predict where a disease may move next

By combining infection trends with travel data and population movement, AI can forecast which regions are at risk days before cases appear.

How it helps: Public health teams can pre-position resources and activate responses in advance.
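One simple way to picture this is an importation-risk score: multiply how widespread infection is at each origin by how many people travel from there to each destination. The sketch below assumes exactly that toy model; the function name, the region labels, and all numbers are hypothetical, and real forecasting models are considerably more involved.

```python
def importation_risk(prevalence, travel_flows):
    """Rank destinations by expected infectious arrivals per day.

    prevalence:   {origin: share of residents currently infectious}
    travel_flows: {(origin, destination): travellers per day}
    """
    risk = {}
    for (origin, destination), travellers in travel_flows.items():
        expected_cases = prevalence.get(origin, 0.0) * travellers
        risk[destination] = risk.get(destination, 0.0) + expected_cases
    # Highest-risk destinations first
    return dict(sorted(risk.items(), key=lambda item: item[1], reverse=True))


# Hypothetical inputs: two affected regions, two regions with no cases yet
prevalence = {"A": 0.02, "B": 0.001}
travel_flows = {
    ("A", "C"): 500,
    ("A", "D"): 50,
    ("B", "C"): 1000,
    ("B", "D"): 2000,
}

ranked = importation_risk(prevalence, travel_flows)
for region, expected in ranked.items():
    print(region, round(expected, 2))
```

Note that region D receives more travellers overall, yet region C ranks higher because more of its arrivals come from the harder-hit origin.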

5. Machine learning models improve with feedback

Each time an outbreak is confirmed or ruled out, the system learns.

- False positives are reduced

- High-risk variables are weighted better

- Local context gets added into future predictions

This self-learning loop keeps the system sharp, even in rapidly changing conditions.
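A feedback loop like this can be sketched as an online logistic model that nudges its weights every time an alert is confirmed or ruled out. This is a minimal illustration under stated assumptions, not how any particular platform learns: the `FeedbackModel` class, the feature names, and the learning rate are invented for the example.

```python
import math


class FeedbackModel:
    """Online logistic regression updated one investigated alert at a time."""

    def __init__(self, feature_names, learning_rate=0.1):
        self.weights = {name: 0.0 for name in feature_names}
        self.bias = 0.0
        self.learning_rate = learning_rate

    def predict(self, features):
        """Return the estimated probability that an alert is a real outbreak."""
        score = self.bias + sum(
            weight * features.get(name, 0.0)
            for name, weight in self.weights.items()
        )
        return 1.0 / (1.0 + math.exp(-score))

    def update(self, features, confirmed):
        """Shift weights toward the investigated outcome (one gradient step)."""
        error = (1.0 if confirmed else 0.0) - self.predict(features)
        self.bias += self.learning_rate * error
        for name in self.weights:
            self.weights[name] += self.learning_rate * error * features.get(name, 0.0)


# Hypothetical feedback: symptom spikes were confirmed, media-only alerts were not
model = FeedbackModel(["symptom_spike", "media_mentions"])
for _ in range(200):
    model.update({"symptom_spike": 1.0}, confirmed=True)
    model.update({"media_mentions": 1.0}, confirmed=False)

print(round(model.predict({"symptom_spike": 1.0}), 2))
print(round(model.predict({"media_mentions": 1.0}), 2))
```

After enough feedback, the variable that kept leading to confirmed outbreaks carries more weight, and the one behind repeated false alarms carries less.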

6. Dashboards convert data into early warning signals

The end result is a structured insight for decision-makers.

Dashboards visualize risk zones, suggest intervention priorities, and allow for live monitoring across regions.

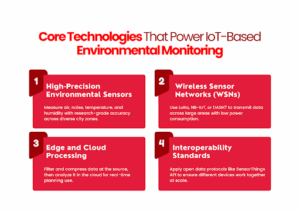

Key Components Behind a Public Health AI System

AI-powered surveillance relies on a coordinated system of tools and frameworks, not just one algorithm or platform. Every element has a distinct function in converting unprocessed data into early detection.

1. Machine Learning + Anomaly Detection

Tracks abnormal trends across millions of real-time data points (clinical, demographic, syndromic).

- Used in: India’s National Public Health Monitoring System

- Speed: Flagged unusual patterns 54× faster than traditional frameworks

2. Hybrid AI Interfaces

Designed for lab and frontline health workers to enhance data quality and reduce diagnostic errors.

- Example: Antibiogo, an AI tool that helps technicians interpret antibiotic resistance results

- Connected to: Global platforms like WHONET

3. Epidemiological Modeling

Estimates the spread, intensity, or incidence of diseases over time using historical data.

- Use case: France used ML to estimate annual diabetes rates from administrative health records

- Value: Allows for non-communicable disease surveillance, not only outbreak detection

Together, these elements create a surveillance system that can record, interpret, and respond to real-time health threats faster and more accurately than manual methods allow.

How Cities and Health Bodies Are Using AI Surveillance in the Real World

AI-powered public health surveillance is already being applied in focused contexts by cities, health departments, and evidence-based programs to identify threats sooner and respond with precision.

The following are three real-world examples that illustrate how AI isn’t simply reviewing data; it’s optimizing frontline response.

1. Identifying TB Earlier in Disadvantaged Populations

In Nagpur, where TB is still a high-burden disease, mobile vans with AI-powered chest X-ray diagnostics are being deployed in slum communities and high-risk populations.

These devices screen automatically, identifying probable TB cases for speedy follow-up, even where on-site radiologists are unavailable.

Why it matters: Rather than waiting for patients to show up, AI is helping cities take screening to them and detect cases earlier.

2. Screening for Heart Disease at Scale

The state’s RHD “Roko” campaign uses AI-assisted digital stethoscopes and mobile echo devices to screen schoolchildren for early signs of rheumatic heart disease. This data is centrally collected and analyzed, helping detect asymptomatic cases that would otherwise go unnoticed.

Why it matters: This isn’t just a diagnosis; it’s preventive surveillance at the population level, made possible by AI’s speed and consistency.

3. Predicting COVID Hotspots with Mobility Data

During the COVID-19 outbreak, Valencia’s regional government used anonymized mobile phone data, layered with AI models, to track likely hotspots and forecast infection surges.

Why it matters: This lets public health teams move ahead of the curve, allocating resources and shaping containment policies based on forecasts, not lagging case numbers.

Each example shows a slightly different application (diagnostics, early screening, and outbreak modeling), but all point to one outcome: AI gives health systems the speed and visibility they need to act before things spiral.

Conclusion

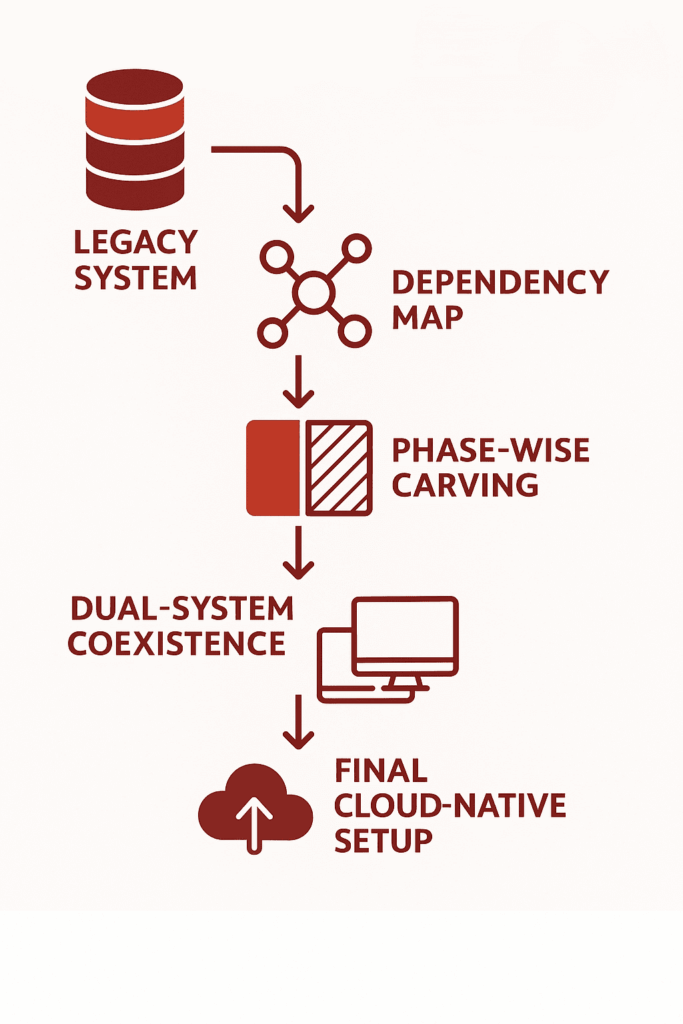

AI and machine learning systems are already proving their role in early disease detection and real-time public health monitoring. But making them work at scale, across fragmented data streams, legacy infrastructure, and local constraints, requires more than just models.

It takes development teams who understand how to translate epidemiological goals into robust, adaptable AI platforms.

That’s where SCS Tech fits in. We work with organizations building next-gen surveillance systems, supporting them with AI architecture, data engineering, and deployment-ready development. If you’re building in this space, we help you make it operational. Let’s talk!

FAQs

1. Can AI systems work reliably with incomplete or inconsistent health data?

Yes, as long as your architecture accounts for it. Most AI surveillance platforms today are designed with missing-data tolerance and can flag uncertainty levels in predictions. But to make them actionable, you’ll need a robust pre-processing pipeline and integration logic built around your local data reality.
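As a rough sketch of what missing-data tolerance can look like, the function below estimates a regional total when some sites have not reported, and attaches a coverage figure and a confidence label to the result. The function name, the 60% coverage cut-off, and the scale-up rule (assuming silent sites resemble reporting ones) are all assumptions for illustration.

```python
def regional_estimate(site_counts, min_coverage=0.6):
    """Estimate a regional case total from partially reported site counts.

    site_counts: {site_id: count, or None if the site has not reported}
    """
    reported = [count for count in site_counts.values() if count is not None]
    coverage = len(reported) / len(site_counts) if site_counts else 0.0
    if not reported:
        return {"estimate": None, "coverage": coverage, "confidence": "none"}
    # Assumption: non-reporting sites look like the reporting ones on average.
    estimate = sum(reported) / coverage
    confidence = "normal" if coverage >= min_coverage else "low"
    return {"estimate": estimate, "coverage": coverage, "confidence": confidence}


# Hypothetical region where half the clinics have not reported yet
partial = regional_estimate({"clinic_a": 10, "clinic_b": None,
                             "clinic_c": 20, "clinic_d": None})
print(partial)
```

Passing the coverage and confidence along with the number lets a dashboard show an estimate without hiding how much of it is extrapolated.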

2. How do you handle privacy when pulling data from public and health systems?

You don’t need to compromise on privacy to gain insight. AI platforms can operate on anonymized, aggregated datasets. With proper data governance and edge processing where needed, you can maintain compliance while still generating high-value surveillance outputs.
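One widely used building block here is aggregation with small-cell suppression: individual records are reduced to counts per region and week, and counts too small to share safely are withheld. The sketch below assumes hypothetical record fields and a minimum cell size of 5; suppression on its own is not a complete anonymization strategy, and the right threshold depends on your governance rules.

```python
from collections import Counter


def aggregate_with_suppression(records, min_cell_size=5):
    """Reduce individual records to (region, week) counts.

    Counts below min_cell_size are replaced with None so that
    small groups cannot be singled out in the published output.
    """
    counts = Counter((record["region"], record["week"]) for record in records)
    return {
        cell: (count if count >= min_cell_size else None)
        for cell, count in counts.items()
    }


# Hypothetical line-level records; only region and week leave the source system
records = (
    [{"region": "X", "week": 1} for _ in range(6)]
    + [{"region": "Y", "week": 1} for _ in range(2)]
)
published = aggregate_with_suppression(records)
print(published)
```

Running this step at the data source, before anything is transmitted, is one way to apply the edge processing mentioned above.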

3. What’s the minimum infrastructure needed to start building an AI public health system?

You don’t need a national network to begin. A regional deployment with access to structured clinical data and basic NLP pipelines is enough to pilot. Once your model starts showing signal reliability, you can scale modularly, both horizontally and vertically.